Restaurant operators with high-traffic service windows and limited IT staffing

Restaurant IT & Security

Restaurants & Food Service

We help restaurants and food-service operators reduce peak-hour disruptions, harden payment-adjacent systems, and run incident response with clear ownership.

Industry detail

Ideal business profile

The kinds of teams and environments this page is built for.

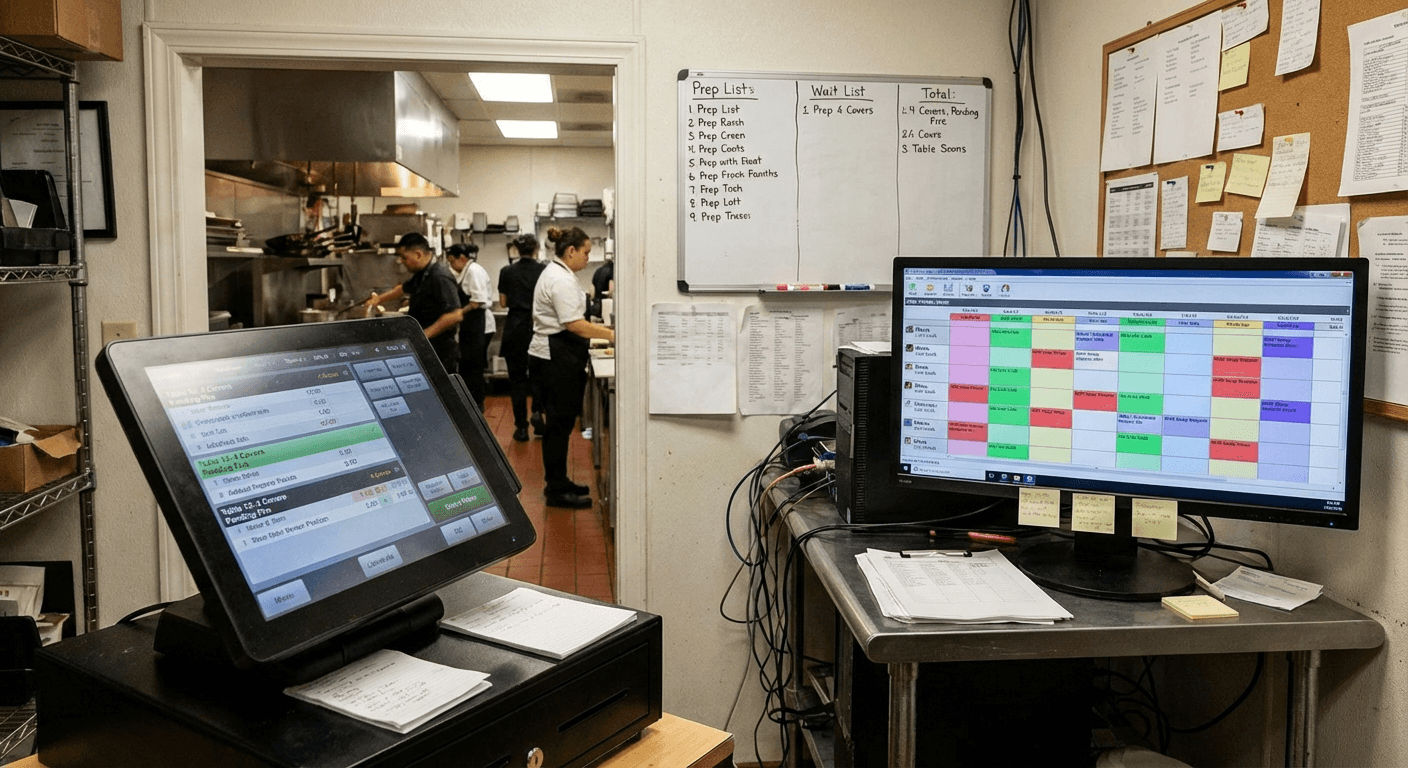

Teams relying on POS, ordering, scheduling, and networked back-of-house systems

Businesses needing stronger outage and incident recovery discipline

Leaders seeking practical controls that do not slow daily operations

Operational environment

What day-to-day operations usually look like in this vertical.

POS and ordering continuity is tightly coupled to front-of-house throughput

Guest Wi-Fi and internal operations networks often lack a clearly enforced boundary

Staff turnover can create access-governance and credential hygiene drift

Multiple delivery and ordering integrations increase dependency complexity

Service-time incidents require immediate triage and clear fallback procedures

Typical system dependencies

How daily work, handoffs, and technical dependencies usually line up.

Systems and handoffs that shape support priorities

Use this view to connect technical recommendations to the way the team actually works.

Core pain points

The recurring issues that usually create stress, exposure, or operational drag.

Peak-hour POS outages or slowdowns that affect revenue and customer experience

Network instability between front-of-house and back-of-house systems

Inconsistent security controls around payment-adjacent workflows

Escalation delays when third-party integrations fail

Limited bandwidth for proactive remediation and resilience work

Risk realities

Where threats and fragility tend to concentrate for this type of organization.

Payment and ordering disruptions can cascade into full-service bottlenecks

Weak network segmentation expands blast radius during security events

Account and endpoint drift increases avoidable incident frequency

Incident response quality declines when shift-based ownership is unclear

Compliance context

Frameworks and regulatory obligations that shape priorities in this sector.

PCI DSS

Payment-related environments require disciplined control and operational guardrails.

How Blueforce applies it: We reduce payment-adjacent exposure through segmentation, endpoint standards, and process control improvements.

FTC Data Security Expectations

Businesses are expected to implement reasonable safeguards and respond effectively to incidents.

How Blueforce applies it: We implement practical controls, incident playbooks, and ownership models aligned to day-to-day operations.

CISA Small Business Cyber Guidance

Foundational controls and readiness practices are critical for continuity-sensitive SMB operations.

How Blueforce applies it: We sequence risk reduction by service impact and implementation feasibility.

Service mapping

How Blueforce services map into the actual needs of this environment.

Primary track

Managed IT & Cybersecurity Foundation

Keep service windows stable by improving uptime support, segmentation, and response readiness.

- Peak-hour incident routing and continuity support model

- Network and endpoint hardening across restaurant operations

- Access-control governance for shift-based staffing environments

- Outage and incident runbooks for POS and ordering dependencies

Expansion track

AI & Context Operations Expansion

Improve operational throughput for internal coordination once core systems are stable.

- Context-aware triage for support and operational requests

- Automation for repetitive service-operations updates and handoffs

- Operational visibility dashboards for incident and uptime trends

- Governed rollout of low-risk workflow automation

30 / 60 / 90 roadmap

A practical phased sequence for stabilizing the environment.

Days 1-30

Stabilize high-impact service dependencies.

- Map POS, network, and ordering dependency pathways

- Define incident ownership by shift and leadership tier

- Address highest-priority endpoint and access weaknesses

- Establish service-window escalation communication standards
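To make "incident ownership by shift and leadership tier" concrete, here is a minimal sketch of an ownership lookup. The shift names, hours, system labels, and role titles are all hypothetical placeholders, not a description of any specific operator's setup; the point is only that every system and time of day resolves to a named owner, with a leadership-tier fallback when no shift is active.

```python
from datetime import time

# Hypothetical shift windows (start inclusive, end exclusive).
SHIFTS = [
    ("open", time(6, 0), time(14, 0)),
    ("close", time(14, 0), time(23, 0)),
]

# Hypothetical first-line owners per (shift, system).
OWNERS = {
    ("open", "pos"): "Opening shift lead",
    ("open", "ordering"): "Opening shift lead",
    ("close", "pos"): "Closing shift lead",
    ("close", "ordering"): "Closing shift lead",
}

# Leadership-tier fallback used when no shift owner applies.
FALLBACK_OWNER = "General manager (on call)"


def shift_for(at):
    """Return the name of the shift covering the given time, or None."""
    for name, start, end in SHIFTS:
        if start <= at < end:
            return name
    return None


def incident_owner(system, at):
    """Resolve who owns an incident for a system at a given time of day."""
    shift = shift_for(at)
    return OWNERS.get((shift, system), FALLBACK_OWNER)


lunch_owner = incident_owner("pos", time(12, 30))
overnight_owner = incident_owner("pos", time(2, 0))
```

In this sketch a POS incident at 12:30 resolves to the opening shift lead, while one at 02:00 falls through to the on-call general manager because no shift covers that hour.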

Days 31-60

Harden operations and normalize response discipline.

- Standardize remediation cadence and exception handling

- Implement segmentation and role-based access refinements

- Run service-time outage and response drills

- Tighten third-party integration escalation playbooks

Days 61-90

Scale predictable uptime and execution quality.

- Tune support queues and incident handoff quality

- Introduce targeted automation for repetitive coordination tasks

- Formalize recurring risk and continuity reviews

- Refresh roadmap based on real incident and service patterns

Priority support path

The work needs clear ownership, timing, and follow-through.

Support rhythm that matches the team

Each phase needs ownership, sequencing, and a support model that fits the way work gets done.

Priority controls checklist

The controls and process disciplines most often worth addressing first.

Prioritize critical systems by peak-hour operational impact

Segment payment-adjacent and non-critical network zones

Standardize endpoint and credential controls for shift operations

Document outage fallback workflows with role ownership

Track unresolved high-risk remediation and aging

Validate backup and restoration readiness for key systems

Establish vendor escalation timing and responsibilities

Review continuity and risk indicators on a recurring cadence
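As one illustration of tracking unresolved high-risk remediation and aging, the sketch below flags open high-risk items that have been waiting longer than a threshold. The item titles, risk labels, and the 30-day threshold are assumptions chosen for the example, not recommended values.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class RemediationItem:
    title: str
    risk: str            # hypothetical labels: "high", "medium", "low"
    opened: date
    resolved: bool = False


def aging_high_risk(items, today, max_age_days=30):
    """Return (title, age_in_days) for unresolved high-risk items
    older than max_age_days, oldest first."""
    overdue = [
        (item.title, (today - item.opened).days)
        for item in items
        if item.risk == "high"
        and not item.resolved
        and (today - item.opened).days > max_age_days
    ]
    return sorted(overdue, key=lambda pair: pair[1], reverse=True)


# Hypothetical backlog used only to exercise the function.
backlog = [
    RemediationItem("Flat network between guest Wi-Fi and POS", "high", date(2024, 1, 2)),
    RemediationItem("Shared manager login on kitchen display", "high", date(2024, 2, 20)),
    RemediationItem("Outdated firmware on receipt printer", "low", date(2023, 11, 1)),
    RemediationItem("Unpatched back-office PC", "high", date(2024, 1, 10), resolved=True),
]

overdue = aging_high_risk(backlog, today=date(2024, 3, 1))
```

With this sample backlog, only the unsegmented network item is returned: it is high risk, unresolved, and 59 days old, while the other items are either recent, low risk, or already resolved.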

Scenario playbooks

Examples of the kinds of situations this environment needs to be ready for.

POS Outage During Peak Service

Trigger: Point-of-sale services fail or degrade during high-traffic hours.

First response: Activate the continuity process, route priority escalation, and communicate the temporary operating procedure to staff.

Stabilization: Restore core workflows, reconcile impacted transactions, and harden controls against recurrence.

Ordering Integration Failure

Trigger: Third-party ordering platform disruption causes order-routing inconsistencies.

First response: Contain the affected integration path, assign an owner for vendor escalation, and enforce the fallback order workflow.

Stabilization: Reconcile order records, validate service integrity, and update integration monitoring controls.

Suspicious Access Activity in Operations Systems

Trigger: Identity or endpoint telemetry indicates possible unauthorized use.

First response: Contain account scope, revoke risky sessions, and execute incident response communication protocol.

Stabilization: Complete remediation and hardening actions, then update the runbook to close the gaps observed during the incident.

Frequently asked questions

Plain-English answers for common buyer questions in this vertical.

Yes. We usually start with control and workflow improvements around your existing systems, then sequence platform changes only when needed.

We design response ownership, communication paths, and access controls that fit rotating staffing models.

Yes. We standardize core controls and escalation procedures across locations while preserving site-level operational realities.

Most operators first see clearer incident routing, fewer repeated outages, and more predictable service-window support response.

Need a Restaurant Support Path?

Get a scoped implementation path aligned to peak-hour operations, payment-adjacent risk, and support reliability.